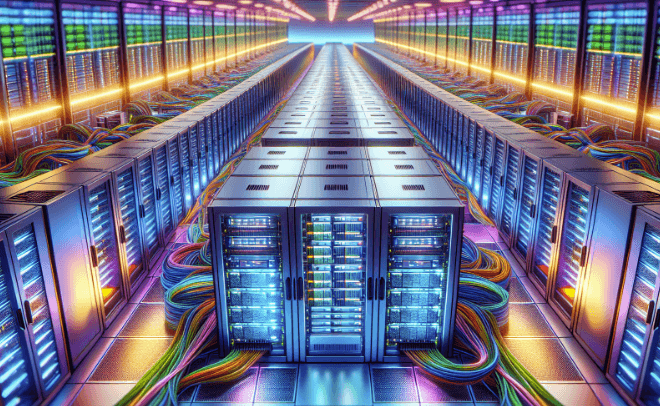

Hyperscale data centers are purpose-built for massive scale, efficiency, and automation. They use standardized, modular architectures and centralized control to speed up provisioning and keep costs predictable. Interoperable hardware, software, and orchestration let operators grow quickly across global networks, while efficient cooling and more efficient silicon keep power use in check as demand expands. Governance and sustainability goals shape supply chains, auditing, and risk management. The sections below look at how these pieces fit together and what they mean in practice.

What Makes Hyperscale Data Centers Different

Hyperscale data centers differ from traditional facilities by treating scale, efficiency, and automation as core design principles. They rely on standardized, repeatable architectures, modular fabrication, and centralized control to enable rapid provisioning and predictable costs.

Governance focuses on vendor compliance and on clear, credible communication, which keeps supplier relationships transparent. This discipline supports growth at scale without sacrificing security or reliability.

Core Technologies Powering Hyperscale

The core technologies behind hyperscale facilities are standardized, modular components and automated management. Interoperable hardware, software, and orchestration make systems scalable, enabling rapid deployment and resilient operations. Edge cooling controls temperatures at scale, which reduces energy waste and allows denser layouts. Advances in silicon raise performance without a proportional increase in power draw, supporting sustainable growth across global data ecosystems.

Designing for Scale: Architecture and Operations

How should a scale-ready design balance modular architecture, automated operations, and resilient workflows to sustain rapid growth? Architecture emphasizes modularity, standardized interfaces, and scalable fabrics, enabling predictable deployment.

Operations prioritize automation, telemetry, and fault isolation. Reliable cooling and flexible routing underpin performance, while governance enforces repeatable processes. The result is a scalable system that can expand with little friction.
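To make the link between telemetry and fault isolation concrete, here is a minimal sketch. It assumes a hypothetical telemetry feed of `(rack_id, server_id, healthy)` samples and a hypothetical rule that a rack is isolated once more than half of its servers report unhealthy. The sample data, the function name `racks_to_isolate`, and the threshold are all illustrative, not taken from any particular operator's tooling.

```python
from collections import defaultdict

# Hypothetical telemetry samples: (rack_id, server_id, healthy).
samples = [
    ("rack-a", "s1", True), ("rack-a", "s2", True),
    ("rack-b", "s3", False), ("rack-b", "s4", False), ("rack-b", "s5", True),
    ("rack-c", "s6", False), ("rack-c", "s7", True), ("rack-c", "s8", True),
]


def racks_to_isolate(samples, max_unhealthy_fraction=0.5):
    """Return racks whose unhealthy share exceeds the threshold (assumed rule)."""
    totals = defaultdict(int)
    unhealthy = defaultdict(int)
    for rack, _server, healthy in samples:
        totals[rack] += 1
        if not healthy:
            unhealthy[rack] += 1
    # Treat the rack as the failure domain: isolate it as a unit so a
    # localized fault does not spread to workloads on healthy racks.
    return sorted(
        rack for rack in totals
        if unhealthy[rack] / totals[rack] > max_unhealthy_fraction
    )


print(racks_to_isolate(samples))  # ['rack-b']
```

Grouping by rack rather than by server reflects the idea of a failure domain: the scheduler drains one well-defined unit instead of chasing individual machines.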

Challenges and Opportunities in Hyperscale Deployments

In hyperscale deployments, the path from design to operation requires a disciplined balance of scale, resilience, and efficiency. The main challenges come from complexity, coordination across teams and suppliers, and long equipment lifecycles.

Sustainability metrics and supply chain resilience are central concerns, because they tie governance to measurable outcomes.

Opportunities come from standardized platforms, modular inventories, transparent auditing, and proactive risk management across global, interconnected operations.

Frequently Asked Questions

What Are the Cost Drivers in Hyperscale Data Centers?

The main cost drivers in hyperscale data centers are capital expenditure, power and cooling, networking, hardware, automation, and ongoing maintenance. These costs scale with workload, footprint, and uptime requirements, so optimization has to be systematic rather than ad hoc.

How Is Energy Efficiency Measured at Scale?

Energy efficiency at scale is measured with standardized metrics and continuous monitoring. The most widely used metric is power usage effectiveness (PUE), the ratio of total facility energy to the energy consumed by IT equipment. Because the measurement is structured and repeatable, operators can compare sites and track improvements over time.
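One widely used standardized metric is power usage effectiveness (PUE): total facility energy divided by IT equipment energy, where a value closer to 1.0 means less overhead. The sketch below shows the arithmetic on hypothetical hourly meter readings; the numbers and the helper name `pue` are assumptions for illustration only.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical hourly meter readings in kWh: (total facility, IT equipment).
readings = [(1200.0, 1000.0), (1150.0, 1000.0), (1300.0, 1040.0)]

# Sum energy over the period first, then divide. Averaging the per-hour
# ratios instead would weight low-load and high-load hours equally.
total_kwh = sum(total for total, _ in readings)
it_kwh = sum(it for _, it in readings)
period_pue = pue(total_kwh, it_kwh)

print(f"PUE over period: {period_pue:.3f}")
```

Continuous monitoring amounts to repeating this calculation over rolling windows and alerting when the ratio drifts upward.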

What Governance Controls Exist for Hyperscale Security?

Governance controls for hyperscale security take the form of layered, auditable frameworks that cover policy, risk assessment, access management, and continuous monitoring. They allow defenses to scale and ensure consistent enforcement across complex, distributed infrastructure.

How Do Hyperscale Operators Handle Data Sovereignty Concerns?

Data sovereignty is addressed through jurisdictional controls and contractual remedies, with data localization where the law requires it. Hyperscale operators implement configurable regional handling, compliant data routing, and auditable practices so that data stays within legal boundaries and customers can verify it.
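As a rough illustration of configurable regional handling, the sketch below checks a proposed storage region against a residency policy and records each decision for audit. The classifications, region names, and the `RESIDENCY_POLICY` table are hypothetical; real policies depend on the applicable law and on each operator's contracts.

```python
from dataclasses import dataclass

# Hypothetical policy table: data classification -> regions where it may be stored.
RESIDENCY_POLICY = {
    "eu-personal": {"eu-west", "eu-central"},
    "us-health": {"us-east"},
    "public": {"eu-west", "eu-central", "us-east", "ap-south"},
}


@dataclass(frozen=True)
class PlacementDecision:
    classification: str
    region: str
    allowed: bool
    reason: str


def check_placement(classification: str, region: str) -> PlacementDecision:
    """Decide whether data of a given class may be placed in a region."""
    allowed_regions = RESIDENCY_POLICY.get(classification)
    if allowed_regions is None:
        # Deny by default so unclassified data never leaves its origin silently.
        return PlacementDecision(classification, region, False,
                                 "unknown classification")
    if region in allowed_regions:
        return PlacementDecision(classification, region, True,
                                 "region permitted by policy")
    return PlacementDecision(classification, region, False,
                             "region outside permitted set")


# Every decision is kept, allowed or not, so the record can be audited later.
audit_log = [
    check_placement("eu-personal", "eu-west"),
    check_placement("eu-personal", "us-east"),
    check_placement("unlabeled", "eu-west"),
]
for decision in audit_log:
    print(decision)
```

The deny-by-default branch is a design choice in this sketch: an unknown classification is treated as a policy gap to be fixed, not as permission.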

What Are the Retirement and Decommissioning Practices for Assets?

Assets are retired and decommissioned through formal, documented processes. Operators plan retirement ahead of time and follow set decommissioning timelines, with systematic disposal, data sanitization, and auditable records at each step. This minimizes both risk and environmental impact.
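A documented decommissioning process can be modeled as a small state machine that refuses to skip steps, for example disposing of a drive before it has been sanitized. The states, the `Asset` class, and the asset ID below are hypothetical and only sketch the idea; they do not represent any standard workflow.

```python
from enum import Enum


class AssetState(Enum):
    IN_SERVICE = "in_service"
    RETIRED = "retired"
    SANITIZED = "sanitized"
    DISPOSED = "disposed"


# Each state has exactly one permitted successor (assumed linear workflow).
NEXT_STATE = {
    AssetState.IN_SERVICE: AssetState.RETIRED,
    AssetState.RETIRED: AssetState.SANITIZED,
    AssetState.SANITIZED: AssetState.DISPOSED,
}


class Asset:
    def __init__(self, asset_id: str):
        self.asset_id = asset_id
        self.state = AssetState.IN_SERVICE
        # The history doubles as an auditable record of every transition.
        self.history = [AssetState.IN_SERVICE]

    def advance(self, target: AssetState) -> None:
        """Move to the target state only if it is the next step in the process."""
        if NEXT_STATE.get(self.state) is not target:
            raise ValueError(
                f"{self.asset_id}: cannot go from {self.state.value} "
                f"to {target.value}"
            )
        self.state = target
        self.history.append(target)


drive = Asset("drive-0001")  # hypothetical asset ID
drive.advance(AssetState.RETIRED)
drive.advance(AssetState.SANITIZED)
drive.advance(AssetState.DISPOSED)
print([state.value for state in drive.history])
```

Attempting `advance(AssetState.DISPOSED)` on an asset that is still in service or merely retired raises `ValueError`, which is the point: sanitization cannot be bypassed.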

Conclusion

In sum, hyperscale data centers combine modular design, interoperable components, and centralized automation to deliver predictable costs and rapid innovation. Think of a relay race: each teammate (servers, networking, cooling, software) depends on precise timing and clean handoffs to win at scale. A 10x improvement in efficiency or provisioning speed is not luck but the product of architecture, governance, and risk-aware operations working in concert. The result is reliable growth with measurable sustainability outcomes.